BY Paul Riopel & Esme Bale

Educational institutions are dealing with a new challenge: ChatGPT, an artificial intelligence (AI) chatbot that poses a threat to academic integrity.

“There’s lots of concern from professors. Some have even expressed with me their worries about being able to differentiate work done by AI and work done by students,” says Philippe Caignon, an academic code of conduct administrator at Concordia University.

“During the pandemic, even when prohibited, students would work together, look things up online, or hide things around their computers,” says Caignon.

ChatGPT’s homepage. Photo by Paul Riopel.

Now AI is providing a new way for some to cheat. Some educational institutions have already banned its use altogether; the New York City Department of Education, for example, has blocked the chatbot from its school networks.

The technology in question, ChatGPT, became the fastest-growing consumer application in history, reaching 100 million monthly active users just two months after its public release.

Although AI has been trending for some time, as seen in the many deepfake videos circulating on social media of famous personalities like Donald Trump and Joe Biden arguing while playing Minecraft, ChatGPT took the world by storm because of its ability to produce human-like responses to almost any user input.

As the technology behind deepfakes drastically improves, deepfakes could even have a political impact. Video by Esme Bale.

Despite the technology’s capabilities, Amélie Daoust-Boisvert, an assistant professor at Concordia, chooses not to get caught up in the hype.

“It is an opportunity for cheating, yes, but so are any other tools,” she says.

Caignon agrees that ChatGPT doesn’t mean the end of academic integrity.

“It’s the new thing, but it won’t be the last,” he explains. “We have to understand it, and how to use it, rather than banning it.”

A recent list of teaching resources released by Stanford University explores ways that a chatbot could be used as a teaching tool, while acknowledging both the pros and cons of the technology.

“If it can be used to learn, then it’s a good thing,” states Caignon. “You can use it to learn geography, for example, but if you use the machine to do all of your work, you not only won’t actually learn anything, but you’ll lose the money you spent on school.”

Inside Concordia’s downtown Webster library. Photo by Paul Riopel.

“I think the threat for higher education comes in not potentially adapting to this new technology,” says Connor Wright, a member of the Montreal AI Ethics Institute (MAIEI).

Still, he says students and educators should remain skeptical and vigilant around the chatbot.

When a student does try to use a chatbot to do their work for them, professors now have tools at their disposal to help catch them. One example is Turnitin, a plagiarism detection service used by schools, which recently developed AI detection software that can flag text generated by chatbots such as ChatGPT in submitted assignments.

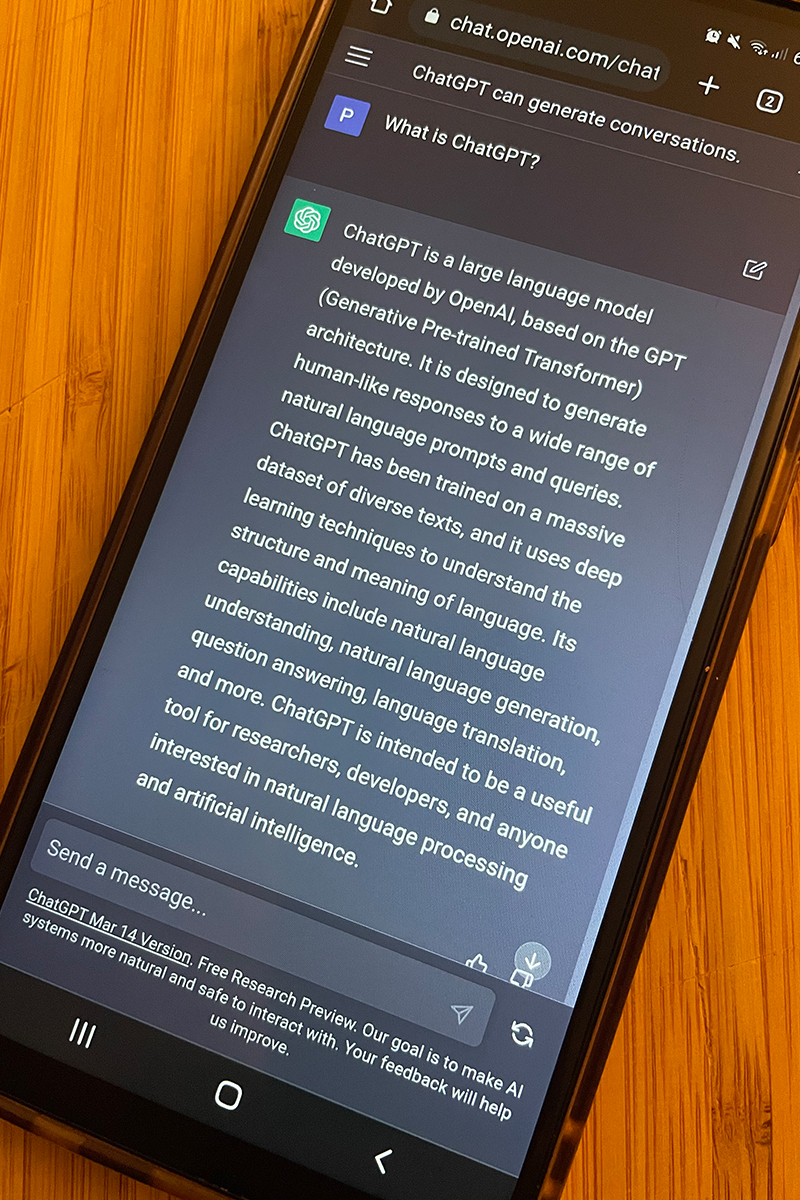

“Interestingly enough, OpenAI has not only ‘created the problem’, but also created the solution to it as well,” Wright says. Sure enough, the company has released its own AI text detection model, which is still improving.

ChatGPT’s response to the question: What is ChatGPT? Photo by Paul Riopel.

“The next step will probably be about using other modulations of data such as videos and audio. GPT-4 is now using images which was not the case before,” explains Gauthier Gidel, an assistant professor at Université de Montréal (UdeM) and a core faculty member of Mila.

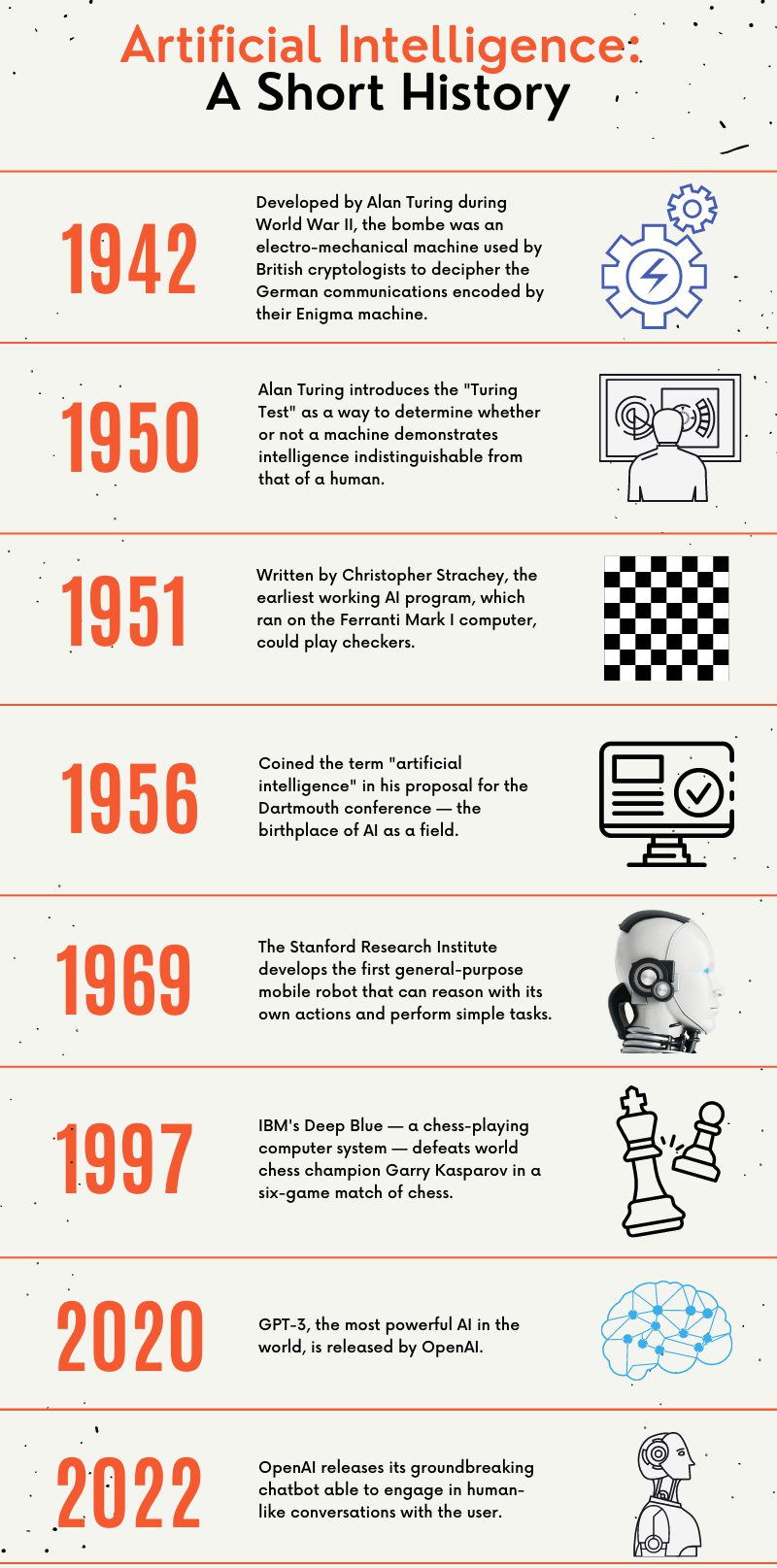

With speculation around the power of future releases, Caignon believes that universities will need to make some changes to their policies and academic codes of conduct.

“The higher levels of the university are already thinking about it. There was a meeting with a subcommittee of associate deans, code admins, assistant code admins, and student representatives to discuss the course of actions available,” says Caignon. “Either they’ll have to constantly update them as AI improves, or set guidelines wide enough that they account for technology not yet even invented,” he adds.

Timeline of innovations in artificial intelligence. Infographic by Paul Riopel.

While the situation is continuously developing, the university has seemingly veered away from taking a heavy-handed approach. For now, Concordia is working to help staff members understand ChatGPT and to offer them tools to detect it.

The university has created a webpage on “AI in the classroom” that provides faculty members with, among other things, recommendations for the pedagogical use of AI, including ways to mitigate its misuse, explains Concordia University spokesperson Vannina Maestracci.

“They’re also facilitating a monthly meeting of a ‘ChatGPT Faculty Interest Group’ which allows faculty members to exchange practices and discuss applications as well as impacts the technology may have on the classroom,” she adds.

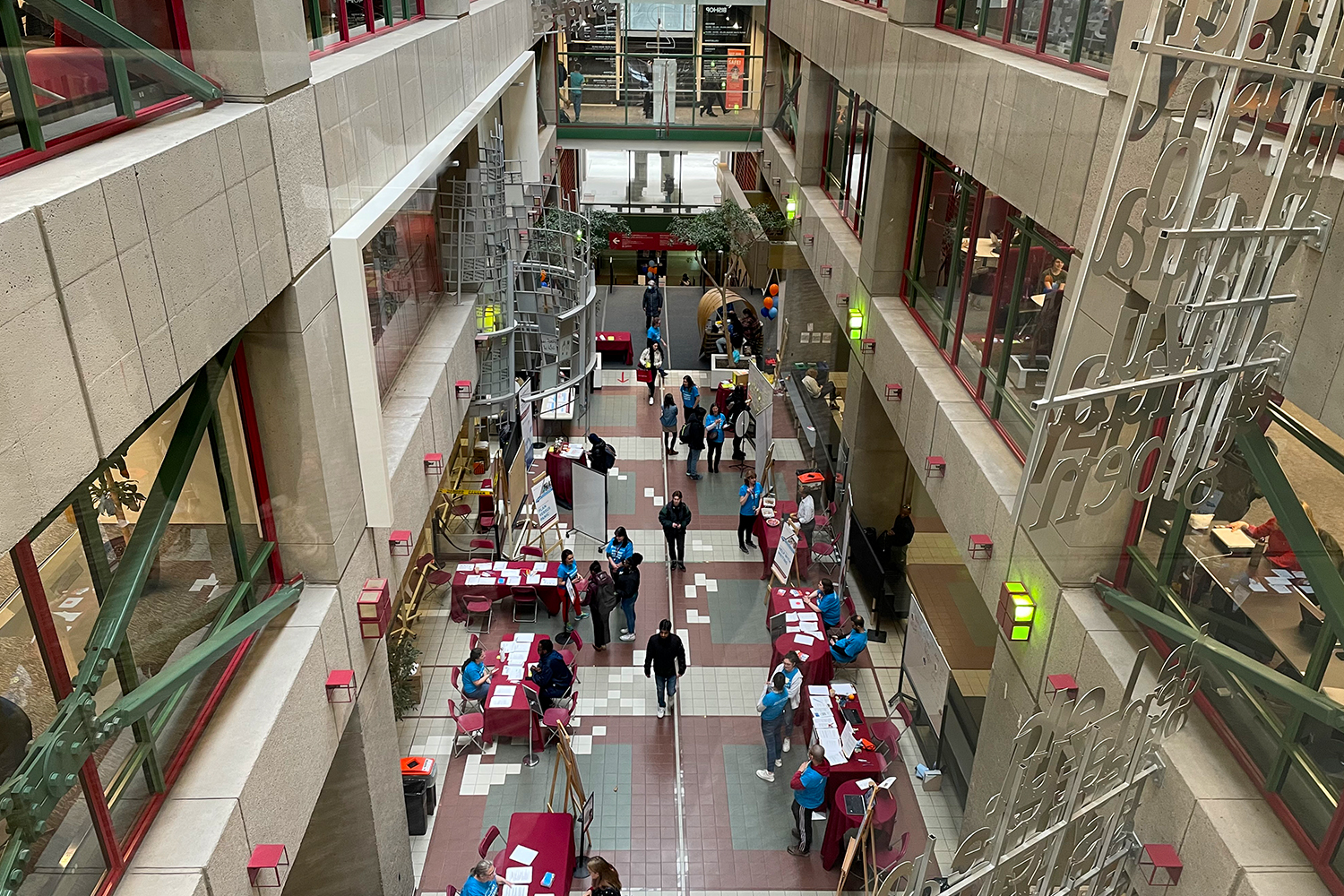

Concordia University’s downtown Webster library is bustling with students. Photo by Paul Riopel.

While there’s still much uncertainty surrounding programs like ChatGPT, with some viewing it as a tool and others as a method of cheating, what is certain is that the current wave of artificial intelligence will bring big changes to higher education in the years to come.